Cameras and input extension

This article introduces the camera model, parameters, and other usage considerations for physical cameras, as well as how to extend input using custom cameras.

Input frame

The input frame is the fundamental data unit in AR, representing all relevant information captured from a camera or other data source in a single frame. An input frame typically includes:

- Raw image data (camera image)

- Camera parameters (such as intrinsic parameters)

- Timestamp

- Camera transformation matrix in world coordinates

- Tracking status

This information provides the spatiotemporal context that AR algorithms need for localization, tracking, rendering, and similar tasks.
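As an illustration only, the fields listed above can be grouped into a single record. The class and field names below (`InputFrameSketch`, `PinholeIntrinsics`, `TrackingStatus`, `camera_to_world`) are hypothetical and are not EasyAR's API. The pose is assumed to be a 4x4 row-major camera-to-world matrix.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class TrackingStatus(Enum):
    # Hypothetical status values; real SDKs define their own set.
    NOT_TRACKING = 0
    LIMITED = 1
    TRACKING = 2


@dataclass
class PinholeIntrinsics:
    width: int   # image width in pixels (w)
    height: int  # image height in pixels (h)
    fx: float    # focal length in pixels along x
    fy: float    # focal length in pixels along y
    cx: float    # principal point x in pixels
    cy: float    # principal point y in pixels


@dataclass
class InputFrameSketch:
    # Hypothetical container mirroring the input frame fields listed above.
    image: bytes                                  # raw camera image data
    intrinsics: PinholeIntrinsics                 # camera parameters
    timestamp: float                              # capture time in seconds
    camera_to_world: Optional[List[List[float]]]  # 4x4 pose, None if unknown
    tracking_status: TrackingStatus


# Usage: a 640x480 frame captured before any pose is known.
frame = InputFrameSketch(
    image=bytes(640 * 480),
    intrinsics=PinholeIntrinsics(640, 480, 500.0, 500.0, 320.0, 240.0),
    timestamp=0.033,
    camera_to_world=None,
    tracking_status=TrackingStatus.NOT_TRACKING,
)
```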

Physical camera

Cameras used in electronic devices typically consist of multiple lenses and mirrors. Camera models, however, are generally not built from this actual optical structure; simplified models are used instead.

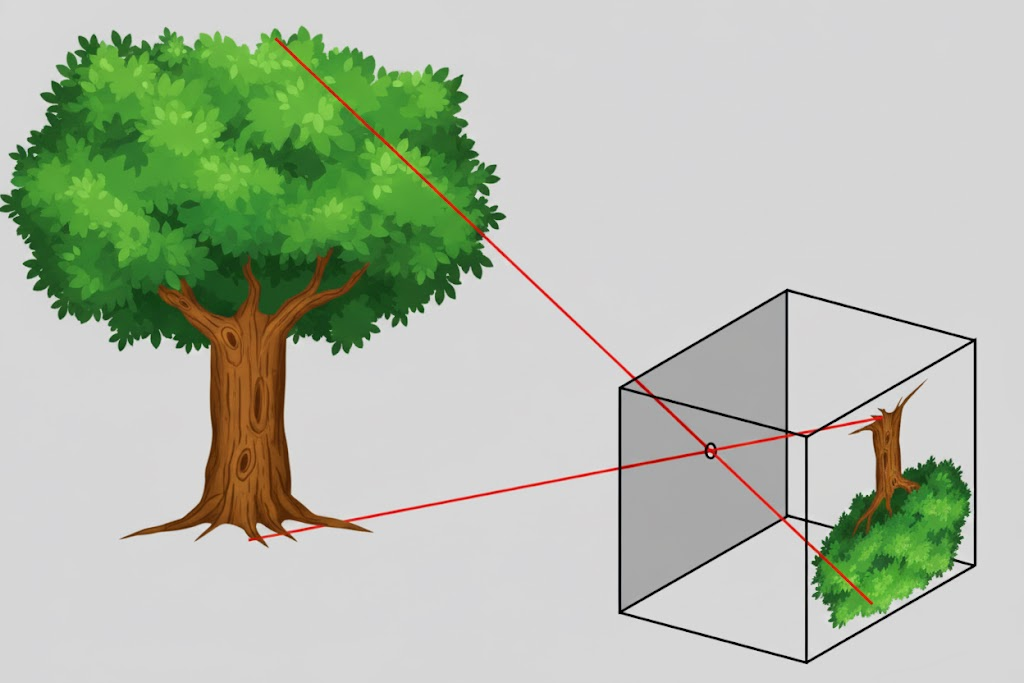

Pinhole camera model

This is the simplest commonly used model: light passes through a small aperture and forms an image rotated by 180 degrees, an inversion that camera output data typically corrects. Six parameters describe this model: the image width and height in pixels \((w, h)\), the focal lengths in pixels \((f_x, f_y)\), and the principal point position in pixels \((c_x, c_y)\). When the image is resized, the focal lengths and the principal point scale by the same factor as the width and height, so every scene point keeps the same relative position in the image.
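A minimal sketch of pinhole projection and of rescaling the six parameters, assuming points are given in camera coordinates with \(Z > 0\) in front of the camera and pixel coordinates measured from the image corner (with a pixel-center convention the principal point shifts slightly differently). The `Pinhole` class is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Pinhole:
    w: int      # image width in pixels
    h: int      # image height in pixels
    fx: float   # focal length in pixels along x
    fy: float   # focal length in pixels along y
    cx: float   # principal point x in pixels
    cy: float   # principal point y in pixels

    def project(self, X: float, Y: float, Z: float) -> tuple:
        """Project a camera-space point to pixel coordinates (u, v)."""
        if Z <= 0:
            raise ValueError("point must be in front of the camera (Z > 0)")
        u = self.fx * X / Z + self.cx
        v = self.fy * Y / Z + self.cy
        return u, v

    def scaled(self, s: float) -> "Pinhole":
        """Parameters of the same camera after resizing the image by factor s.

        Assumes the pixel-corner convention, so cx and cy scale exactly by s.
        """
        return Pinhole(round(self.w * s), round(self.h * s),
                       self.fx * s, self.fy * s, self.cx * s, self.cy * s)


cam = Pinhole(1280, 720, 1000.0, 1000.0, 640.0, 360.0)
half = cam.scaled(0.5)
u, v = cam.project(0.2, -0.1, 2.0)     # (740.0, 310.0)
u2, v2 = half.project(0.2, -0.1, 2.0)  # (370.0, 155.0)
# The relative position u / w, v / h is the same in both images.
```

Halving the resolution halves every pixel quantity, which is why the projected point lands at the same fraction of the image width and height.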

OpenCV camera model

Some cameras exhibit significant radial and tangential distortion. The OpenCV camera model extends the pinhole model by adding higher-order parameters to describe radial distortion (\(k_1, k_2, k_3, \cdots\)) and tangential distortion (\(p_1, p_2\)).
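The following sketch applies distortion to normalized coordinates \((x, y) = (X/Z, Y/Z)\) using the radial and tangential equations from OpenCV's calib3d documentation, restricted to \(k_1, k_2, k_3\) and \(p_1, p_2\) (OpenCV's rational model adds further coefficients in a denominator, omitted here). The function names are hypothetical.

```python
def distort_opencv(x: float, y: float, k1: float, k2: float, k3: float,
                   p1: float, p2: float) -> tuple:
    """Apply radial (k1..k3) and tangential (p1, p2) distortion to normalized coords."""
    r2 = x * x + y * y
    radial = 1 + k1 * r2 + k2 * r2 ** 2 + k3 * r2 ** 3
    xd = x * radial + 2 * p1 * x * y + p2 * (r2 + 2 * x * x)
    yd = y * radial + p1 * (r2 + 2 * y * y) + 2 * p2 * x * y
    return xd, yd


def project_opencv(X, Y, Z, fx, fy, cx, cy, k1, k2, k3, p1, p2):
    """Pinhole projection with distortion applied before the focal lengths (Z > 0)."""
    xd, yd = distort_opencv(X / Z, Y / Z, k1, k2, k3, p1, p2)
    return fx * xd + cx, fy * yd + cy
```

With all coefficients set to zero, `project_opencv` reduces to the plain pinhole projection, which is what "extends the pinhole model" means in practice.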

Note

Some trackers do not support the OpenCV camera model.

OpenCV fisheye camera model

Fisheye cameras use a non-perspective projection to compress a very wide field of view into a limited imaging area. In addition to the six pinhole model parameters, the OpenCV fisheye camera model describes distortion using \(k_1, k_2, k_3, k_4\); unlike the OpenCV camera model, it has no tangential distortion terms.
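A minimal sketch of the fisheye distortion, following the equations in OpenCV's fisheye module documentation: the angle \(\theta = \arctan r\) between the incoming ray and the optical axis is distorted to \(\theta_d = \theta(1 + k_1\theta^2 + k_2\theta^4 + k_3\theta^6 + k_4\theta^8)\), and the normalized point is rescaled by \(\theta_d / r\). The function names are hypothetical, and only points with \(Z > 0\) are handled.

```python
import math


def distort_fisheye(x: float, y: float, k1: float, k2: float,
                    k3: float, k4: float) -> tuple:
    """Map normalized pinhole coords (X/Z, Y/Z) to distorted fisheye coords."""
    r = math.hypot(x, y)
    if r < 1e-12:
        return x, y  # the optical axis maps to itself
    theta = math.atan(r)  # angle between the incoming ray and the optical axis
    t2 = theta * theta
    theta_d = theta * (1 + k1 * t2 + k2 * t2 ** 2 + k3 * t2 ** 3 + k4 * t2 ** 4)
    scale = theta_d / r
    return x * scale, y * scale


def project_fisheye(X, Y, Z, fx, fy, cx, cy, k1, k2, k3, k4):
    """Fisheye projection to pixels; assumes Z > 0."""
    xd, yd = distort_fisheye(X / Z, Y / Z, k1, k2, k3, k4)
    return fx * xd + cx, fy * yd + cy
```

Even with all \(k_i = 0\), a ray 60 degrees off-axis lands at normalized radius \(\pi/3 \approx 1.05\) instead of the pinhole value \(\tan 60^\circ \approx 1.73\); this is the compression of wide-angle content described above.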

Note

Some trackers do not support the OpenCV fisheye camera model.

Camera orientation and image orientation

On mobile phones:

- When the phone is held horizontally (rotated 90 degrees counterclockwise from the normal vertical hold) and the screen displays in landscape, the rear camera image matches the real scene when displayed as-is.

- Changing only the screen display orientation, without changing the device's physical orientation, does not change the direction of the physical camera's output image.

- When the phone is held vertically and the screen displays in portrait, the rear camera image must be rotated 90 degrees clockwise to match the real scene.

- When the screen orientation changes, the rendered camera image must be rotated in the opposite direction to compensate, so that it still matches the real scene.
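At the pixel level, the 90-degree clockwise rotation needed in the portrait case moves the pixel at column \(x\), row \(y\) of a \(W \times H\) image to column \(H - 1 - y\), row \(x\) of an \(H \times W\) image. The sketch below copies pixels to make the mapping explicit; renderers often achieve the same effect through texture coordinates instead. `rotate_cw90` is a hypothetical helper.

```python
def rotate_cw90(image):
    """Rotate a row-major image (a list of rows) 90 degrees clockwise."""
    h = len(image)
    w = len(image[0]) if h else 0
    out = [[None] * h for _ in range(w)]  # result has w rows and h columns
    for y in range(h):
        for x in range(w):
            out[x][h - 1 - y] = image[y][x]
    return out


# Usage: a 3x2 image becomes a 2x3 image.
rotated = rotate_cw90([[1, 2, 3],
                       [4, 5, 6]])
# rotated == [[4, 1], [5, 2], [6, 3]]
```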

Camera and image orientations are typically defined relative to the device's natural orientation:

- Mobile phones

  - Android: Android defines a natural orientation (normal vertical hold), which is also the reference for IMU sensors. The rotation angle of the camera output image relative to this orientation is available as a camera parameter.

  - iOS: Although iOS doesn't explicitly define a natural orientation, its IMU uses the same reference as Android.

- Tablets: Natural orientation varies; some use landscape hold, others use portrait hold like phones.

- Glasses: Natural orientation is typically landscape hold.

Rendering the camera image correctly requires taking both the camera orientation and the screen orientation into account.
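For a rear camera, the two orientations combine into a single clockwise rotation. The sketch below follows the rear-camera formula from the Android `Camera.setDisplayOrientation` reference. It assumes `sensor_orientation_deg` is the clockwise angle the output image must be rotated to appear upright in the natural orientation (how Android defines sensor orientation, typically 90 on phones), and `screen_rotation_deg` is the screen rotation in degrees, such as Android's `Display.getRotation()` value converted to 0, 90, 180 or 270. The function name is hypothetical.

```python
def display_rotation_cw(sensor_orientation_deg: int, screen_rotation_deg: int) -> int:
    """Clockwise rotation (degrees) to apply to a rear camera image before display."""
    # Screen rotation is compensated in the opposite direction of the sensor offset.
    return (sensor_orientation_deg - screen_rotation_deg) % 360


# Typical phone with a sensor orientation of 90 degrees:
portrait = display_rotation_cw(90, 0)    # 90: rotate clockwise, as in portrait hold
landscape = display_rotation_cw(90, 90)  # 0: image already matches the scene
```

These two results match the portrait and landscape cases described earlier for mobile phones.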

Camera types and flipping

Mobile phones generally have both rear and front cameras. Front camera images must be flipped horizontally before display to simulate a mirror. Without flipping, the image looks unnatural: when users move to their left, their image moves to the right.
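A horizontal flip maps column \(x\) of a \(W\)-pixel-wide image to column \(W - 1 - x\). Anything drawn on top of the flipped preview must be mirrored the same way; for a continuous x coordinate measured from the image corner, that is \(u \mapsto W - u\). Both helpers below are hypothetical.

```python
def flip_horizontal(image):
    """Mirror a row-major image (a list of rows) left to right."""
    return [list(reversed(row)) for row in image]


def mirror_u(u: float, width: int) -> float:
    """Mirror a continuous x coordinate (pixel-corner convention) across the image."""
    return width - u


flipped = flip_horizontal([[1, 2, 3],
                           [4, 5, 6]])
# flipped == [[3, 2, 1], [6, 5, 4]]
```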

Input extension

EasyAR supports input extension through custom cameras. A custom camera passes externally acquired input frames into the AR system for trackers to use, so you can implement one when you need to obtain image data yourself.
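The producer side of a custom camera can be pictured as "acquire a frame, then hand it on". The sketch below is a hypothetical, platform-neutral illustration and not EasyAR's API: `CustomCameraSketch`, `Frame`, `grab` and `pump` are invented names. As a design assumption, frames go into a small bounded queue that drops the oldest frame when full, so a slow consumer receives recent frames instead of a growing backlog. In a real integration, the consumer would be the SDK's input-frame entry point for your platform.

```python
import queue
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Frame:
    image: bytes      # externally acquired image data
    timestamp: float  # capture time in seconds


class CustomCameraSketch:
    """Hypothetical custom camera that queues externally acquired frames."""

    def __init__(self, grab: Callable[[], Optional[Frame]], max_pending: int = 2):
        self._grab = grab  # hypothetical source: returns None when exhausted
        self.pending = queue.Queue(maxsize=max_pending)

    def pump(self) -> bool:
        """Acquire one frame and queue it; return False when the source is done."""
        frame = self._grab()
        if frame is None:
            return False
        if self.pending.full():
            try:
                self.pending.get_nowait()  # drop the oldest frame
            except queue.Empty:
                pass
        self.pending.put_nowait(frame)
        return True


# Usage: three frames arrive but only the two newest remain queued.
frames = iter([Frame(b"a", 0.0), Frame(b"b", 0.033), Frame(b"c", 0.066)])
cam = CustomCameraSketch(lambda: next(frames, None))
while cam.pump():
    pass
```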

Platform-specific guides

Camera and input extension usage is platform-dependent. Refer to the following guides based on your target platform: