Introduction to EasyAR Mega

EasyAR Mega is an end-cloud collaborative spatial computing technology for building persistent, high-precision digital twins of large physical spaces, such as a city, a campus, or a large shopping mall. With EasyAR Mega, your application can achieve large-scale, high-precision indoor and outdoor localization and virtual-real occlusion, delivering unprecedented spatial interaction experiences to users.

This chapter briefly introduces EasyAR Mega's core working principles, expected effects, and platform adaptation guidelines from a developer's perspective.

Important

Non-developer users (such as product managers, operations staff, and testers) should go directly to the Mega usage guide to learn about Mega services.

Before starting: ensure localization service readiness

Before integrating EasyAR Mega functionality into your application, one core prerequisite must be met: the Mega cloud localization service must be ready. This means:

- On-site data collection completed
  - Collect target area data using the specified devices
  - Use Mega Toolbox to collect EIF data for effect validation
- Mega Block mapping completed
- Localization service enabled and bound to the application
  - Add the Block to the Mega localization library in the development center
  - Obtain a valid App ID and API Key and configure them correctly in your project
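
Of the items above, the App ID and API Key are the ones that live in your own project, so a simple startup check can catch empty or leftover placeholder values before any localization request is made. The following Python sketch is illustrative only: `MegaCredentials`, `unconfigured_fields`, and the placeholder strings are hypothetical names, not part of any EasyAR SDK, and a passing check does not prove that the Block is mapped, added to the localization library, or reachable.

```python
from dataclasses import dataclass

# Hypothetical placeholder values that indicate a credential was never filled in.
PLACEHOLDERS = {"", "your-app-id", "your-api-key", "todo", "changeme"}


@dataclass(frozen=True)
class MegaCredentials:
    """Hypothetical container for the App ID and API Key named in the checklist."""
    app_id: str
    api_key: str


def unconfigured_fields(creds: MegaCredentials) -> list[str]:
    """Return the names of credential fields that are empty or still placeholders."""
    return [name for name in ("app_id", "api_key")
            if getattr(creds, name).strip().lower() in PLACEHOLDERS]
```

For example, `unconfigured_fields(MegaCredentials("abc123", "  "))` flags only `api_key`, which the application can log at launch instead of silently waiting for content that never appears.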

Important

If the above steps are not completed, the application will fail to obtain localization results, manifesting as "AR content never triggers". Be sure to verify service availability before development.

Basic principles of Mega localization

Unlike traditional GNSS localization that relies on satellite signals, EasyAR Mega is based on advanced visual localization technology. By matching real-time image data captured by user devices with pre-built high-precision 3D data, it determines the user's 6DoF pose in the physical world. Based on this pose, the application can render and overlay virtual content at the correct physical location.
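
To make "render virtual content at the correct physical location" concrete, the sketch below shows the standard rigid-transform step behind it. Given the camera's 6DoF pose in the map (rotation R and translation t, mapping camera coordinates into map coordinates), a content anchor stored in map coordinates is expressed in the camera frame as p_cam = R^T (p_map - t). The `Pose` class and helper names are hypothetical, and the axis convention (y up) is an assumption; real engines and SDKs define their own conventions.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]
Mat3 = tuple[Vec3, Vec3, Vec3]


@dataclass(frozen=True)
class Pose:
    """Hypothetical 6DoF pose: R and t map camera-frame points into the map frame."""
    R: Mat3  # 3x3 rotation (orientation), row-major
    t: Vec3  # camera position in map coordinates


def yaw_rotation(deg: float) -> Mat3:
    """Rotation about the y axis by `deg` degrees (y assumed to point up)."""
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    return ((c, 0.0, s), (0.0, 1.0, 0.0), (-s, 0.0, c))


def map_to_camera(pose: Pose, p_map: Vec3) -> Vec3:
    """Express a map-frame anchor in the camera frame: p_cam = R^T (p_map - t)."""
    d = [p_map[i] - pose.t[i] for i in range(3)]
    # Component j of R^T d is the dot product of column j of R with d.
    return tuple(sum(pose.R[i][j] * d[i] for i in range(3)) for j in range(3))
```

For example, a camera at map position (2, 0, 5) with identity orientation sees an anchor at (2, 1, 3) at camera coordinates (0, 1, -2). In practice the rendering engine performs this step once the camera pose is set; the sketch only illustrates the math.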

The workflow is as follows:

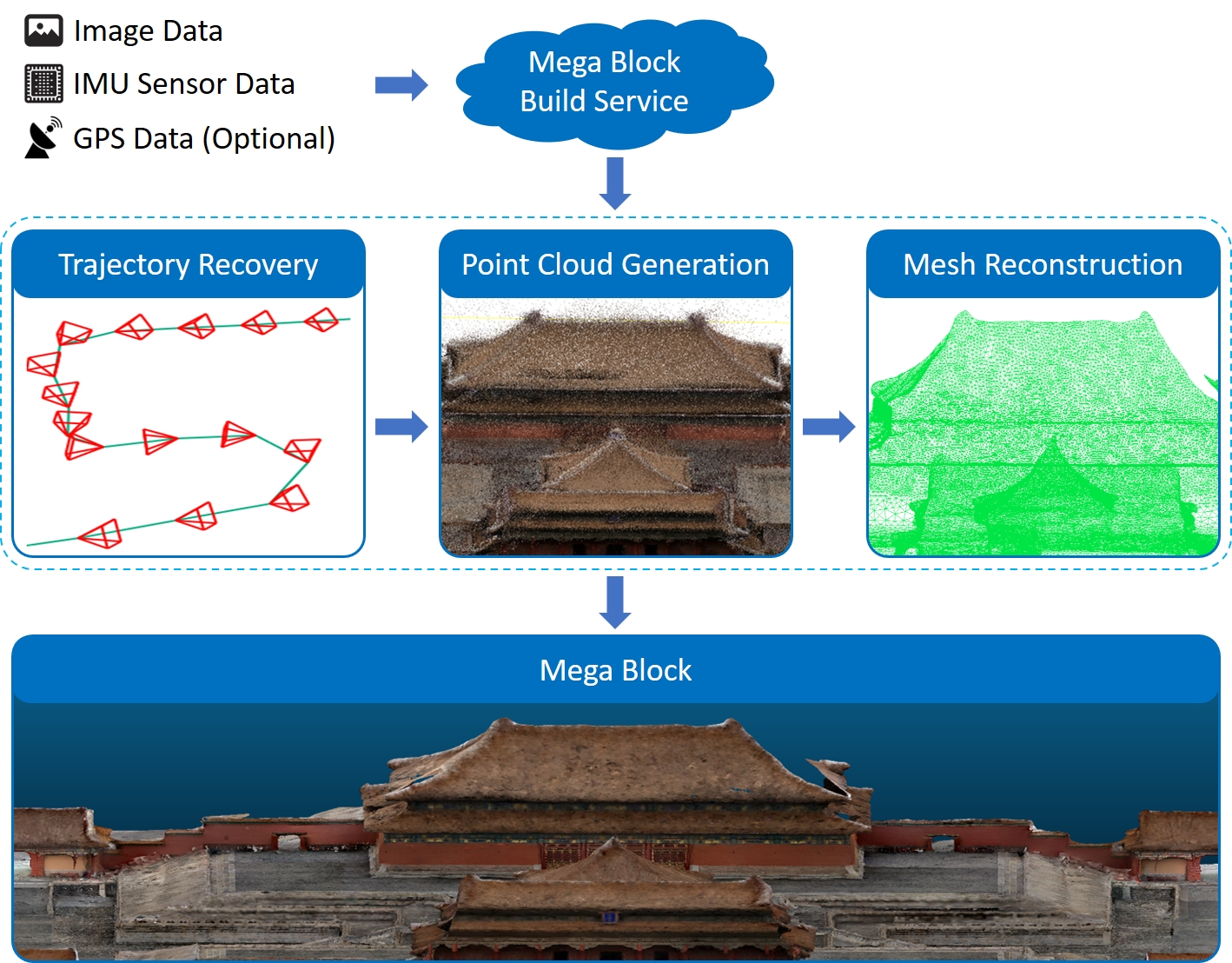

Map Construction:

- Use professional equipment (such as panoramic cameras) to collect data in the target area.

- Upload the collected data (such as .360 files) through the EasyAR mapping management backend.

- The cloud processing platform processes the collected images: it uses advanced AI algorithms to extract the visual features of the target area, fuses the images with sensor data such as IMU readings to recover the motion trajectory during collection (i.e., the camera pose at each moment), and then generates a 3D point cloud of the entire scene and builds a dense, texture-mapped mesh.

- Ultimately, the mapping system outputs a high-precision "Mega Block map" defined by EasyAR, containing 3D geometric information and visual features. This map is the cornerstone of Mega localization.

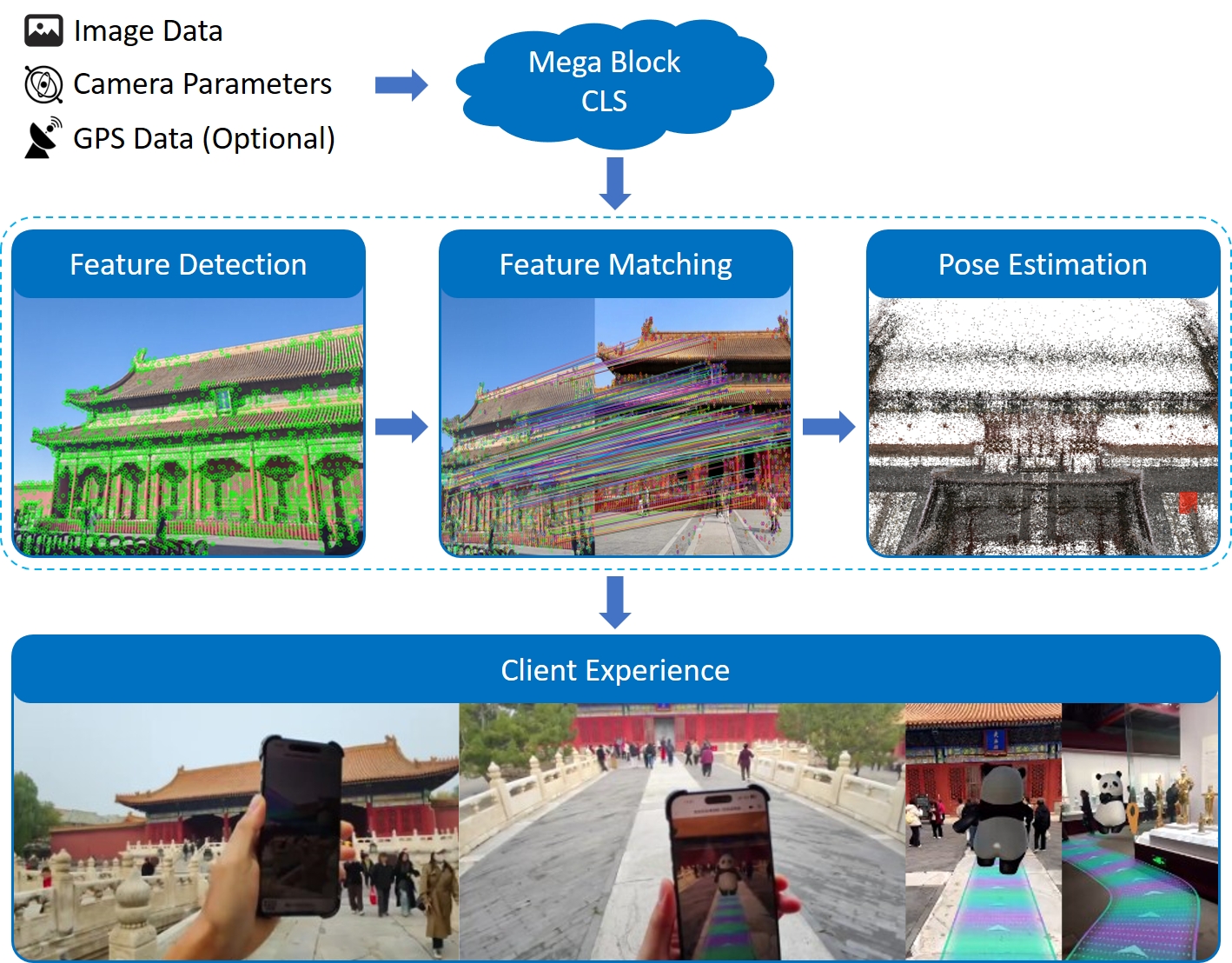

Real-time Localization:

- The user opens the application. The device camera captures real-time images of the user's field of view, and the application sends them, along with the camera intrinsics, extrinsics (if available), and auxiliary information such as GNSS (if available), to the Mega cloud localization service.

- The Mega cloud localization service extracts the visual features from the uploaded images and performs rapid comparison and matching against the Mega Block map in the localization database.

- Once a match is successful, the system can calculate the user's precise current pose (i.e., position and orientation) in the map with centimeter-level accuracy.

- The Mega cloud localization service then returns the calculated pose to the application, where it is fused with the device's own SLAM system for tracking.

- Finally, the application obtains a localized pose that is continuously tracked in real time, so virtual content is displayed at its pre-anchored positions in the physical world and stays aligned as the user moves.

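The fusion step can be illustrated with a common approach: use one localization result to align the local SLAM coordinate frame with the Mega map, then chain that alignment with every new SLAM pose. This Python sketch assumes 4x4 rigid transforms where `a_T_b` maps coordinates in frame b into frame a, and assumes the cloud pose and the SLAM pose refer to the same captured frame. The helper names are hypothetical, and EasyAR's actual fusion may differ (for example, by filtering several results); this is not the SDK's implementation.

```python
import math

Mat4 = list[list[float]]


def make_pose(yaw_deg: float, tx: float, ty: float, tz: float) -> Mat4:
    """Build a 4x4 rigid transform: rotation about the y axis plus a translation."""
    c, s = math.cos(math.radians(yaw_deg)), math.sin(math.radians(yaw_deg))
    return [[c, 0.0, s, tx],
            [0.0, 1.0, 0.0, ty],
            [-s, 0.0, c, tz],
            [0.0, 0.0, 0.0, 1.0]]


def matmul(a: Mat4, b: Mat4) -> Mat4:
    """Compose two 4x4 transforms (apply b first, then a)."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def rigid_inverse(T: Mat4) -> Mat4:
    """Invert [R | t] as [R^T | -R^T t]; valid only for rigid transforms."""
    Rt = [[T[j][i] for j in range(3)] for i in range(3)]  # R transposed
    t = [T[i][3] for i in range(3)]
    inv_t = [-sum(Rt[i][k] * t[k] for k in range(3)) for i in range(3)]
    return [Rt[i] + [inv_t[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]


def align_slam_to_map(map_T_cam: Mat4, slam_T_cam: Mat4) -> Mat4:
    """Hypothetical helper: compute map_T_slam from one localization result.

    map_T_cam:  camera pose returned by the cloud for the uploaded frame.
    slam_T_cam: camera pose reported by local SLAM for that same frame.
    """
    return matmul(map_T_cam, rigid_inverse(slam_T_cam))


def track_in_map(map_T_slam: Mat4, slam_T_cam_now: Mat4) -> Mat4:
    """Hypothetical helper: current camera pose expressed in map coordinates."""
    return matmul(map_T_slam, slam_T_cam_now)
```

Between localization results only the SLAM pose changes, so content stays anchored at the frame rate of local tracking. In this kind of design, each new cloud result simply refreshes `map_T_slam`, which also resets any drift that local SLAM has accumulated since the previous result.
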
Effects and expected results

After successfully integrating EasyAR Mega, your application can achieve the following effects:

- Centimeter-level accuracy: Whereas GNSS errors range from several meters to tens of meters, Mega positioning can provide sub-meter or even centimeter-level accuracy, allowing virtual content to be stably "anchored" to specific locations in the real world.

- Persistent space: Virtual content can be placed anywhere in the physical world, and all users see consistent content at the same location.

- Real occlusion: Leveraging Mega's spatial understanding capabilities, virtual objects can be occluded by real buildings or obstacles, greatly enhancing immersion.

- Operation in GNSS-denied areas: In areas with weak or no GNSS signals, such as indoors, underground parking lots, urban streets with tall buildings, or forested mountains, Mega still provides stable and reliable positioning services.

The video shows a typical example of using EasyAR Mega:

- High-precision, persistent spatial positioning allows virtual content to perfectly adhere to building surfaces, presenting stunning dynamic videos and meticulously designed large-scale 3D posters.

- Real occlusion, enabled by spatial understanding, lets fireworks blooming in the sky and other digital effects blend harmoniously with the surrounding environment.

- With advanced visual algorithms, the entire experience remains robust in complex, crowded environments and operates stably even at night.

Possible non-ideal situations

Slow positioning recognition

In crowded areas like large event venues, network latency and concurrent requests may cause significant delays in Mega cloud positioning. Users might need to wait a while before virtual content appears.

Environmental changes causing errors

If the physical environment undergoes drastic changes (e.g., construction barriers, seasonal vegetation changes), positioning accuracy may decrease or be lost. Mega maps require regular updates to adapt to environmental changes.

Drift during continuous experience

Mega positioning is fused with the device's native SLAM system for tracking, which keeps the camera running continuously. During prolonged sessions the device may throttle its CPU, leading to stuttering, dropped frames, or drift in the tracking scale.

Tip

For more details on abnormal effects or failures, refer to the Troubleshooting section.

Extension suggestions

If you encounter non-programming development issues during EasyAR Mega integration, such as service failures, scene changes, business scaling, etc., please visit our Mega usage guide.

In this guide, you can find:

- Service creation: Learn how to create Mega services and perform simple troubleshooting.

- Effect optimization: Learn how to preview runtime effects, collect exception data, monitor cold starts, and more.

- Persistent operation: Understand how to handle scene changes, business scaling, migration/upgrades, and other long-term operation needs.

- Business integration: Get familiar with using practical business data such as navigation road networks.

- Reference resources: Operation manuals for practical tools such as Mega Studio and Mega Toolbox.

Through this document, we hope you have gained a clear understanding of EasyAR Mega's working principles and effects. Next, you can begin preparing your first Mega project!

Platform-specific guidelines

EasyAR Mega's integration method is closely related to the platform. Please refer to the following guides based on your target platform: